Iustin Pop: Raspberry PI OS: upgrading and cross-grading

One of the downsides of running Raspberry PI OS is the fact that - not
having the resources of pure Debian - upgrades are not recommended,
and cross-grades (migrating between armhf and arm64) are not even
mentioned. Is this really true? It is, after all, a Debian-based
system, so it should in theory be doable. Let's try!

Upgrading

The recently announced release based on Debian Bookworm says:

We have always said that for a major version upgrade, you should
re-image your SD card and start again with a clean image. In the
past, we have suggested procedures for updating an existing image to
the new version, but always with the caveat that we do not recommend
it, and you do this at your own risk.

This time, because the changes to the underlying architecture are so
significant, we are not suggesting any procedure for upgrading a
Bullseye image to Bookworm; any attempt to do this will almost
certainly end up with a non-booting desktop and data loss. The only
way to get Bookworm is either to create an SD card using Raspberry
Pi Imager, or to download and flash a Bookworm image from here with
your tool of choice.

Which means it's time to actually try it. It turns out it's actually
trivial, if you use RPIs as headless servers. I had only three issues:

- if using an initrd, the new initrd-building scripts/hooks look for
  some binaries in /usr/bin, and not in /bin; solution: manually
  install the usrmerge package, and then re-run dpkg --configure -a;
- also if using an initrd, the scripts look for the kernel config file
  in /boot/config-$(uname -r), and the raspberry pi kernel package
  doesn't provide this; workaround:
  modprobe configs && zcat /proc/config.gz > /boot/config-$(uname -r);
- and finally, on normal RPI systems that don't use manual
  configuration of interfaces in /etc/network/interfaces, migrating
  from the previous dhcpcd to NetworkManager will break network
  connectivity, and require you to log in locally and fix things.
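The kernel config workaround from the second item can be wrapped in a small helper that is safe to run repeatedly. This is only a sketch: the function name save_kernel_config and its two parameters are made up for illustration, and it assumes the configs module is already loaded so that /proc/config.gz exists.

```shell
#!/bin/sh
# Hypothetical helper (not part of any package): write the running kernel's
# configuration where the initrd hooks expect it, unless a file is already there.
#   $1: gzip-compressed config (normally /proc/config.gz)
#   $2: destination (normally /boot/config-$(uname -r))
save_kernel_config() {
    src=$1
    dest=$2
    if [ -e "$dest" ]; then
        echo "$dest already exists, leaving it alone"
        return 0
    fi
    if [ ! -r "$src" ]; then
        echo "$src not readable; try 'modprobe configs' first" >&2
        return 1
    fi
    # Decompress to a temporary name first so a failure leaves no partial file.
    gzip -dc "$src" > "$dest.tmp" && mv "$dest.tmp" "$dest"
}

# Typical use, as root:
#   modprobe configs && save_kernel_config /proc/config.gz "/boot/config-$(uname -r)"
```

Refusing to overwrite an existing file keeps the helper harmless if a later kernel package starts shipping the config itself.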

I expect most people to hit only the 3rd, and almost no-one to use
initrd on raspberry pi. But, overall, aside from these issues and a
couple of cosmetic ones (login.defs being rewritten from scratch and
showing a baffling diff, for example), it was easy.

Is it worth doing? Definitely. Had no data loss, and no non-booting
system.

Cross-grading (32 bit to 64 bit userland)

This one is actually painful. Internet searches go from "it's
possible, I think" to "it's definitely not worth trying". Examples:

- rsync files over after reinstall
- just install on a new sdcard
- confusion about kernel vs userland
- don't do it, not recommended
- stackexchange post without answers

Aside from these, there are a gazillion other posts about switching
the kernel to 64 bit. And that's worth doing on its own, but it's
only half the way.

So, armed with two different systems - a RPI4 4GB and a RPI Zero W2 -
I tried to do this. And while it can be done, it takes many hours -
first system was about 6 hours, second the same, and a third RPI4
probably took ~3 hours only since I knew the problematic issues.

So, what are the steps? Basically:

- install devscripts, since you will need dget
- enable new architecture in dpkg: dpkg --add-architecture arm64
- switch over apt sources to include the 64 bit repos, which are
  different than the 32 bit ones (Raspberry PI OS did a migration
  here; normally a single repository has all architectures, of course)
- downgrade all custom rpi packages/libraries to the standard
  bookworm/bullseye version, since dpkg won't usually allow a single
  library package to have different versions (I think it's possible to
  override, but I didn't bother)
- install libc for the arm64 arch (this takes some effort, it's
  actually a set of 3-4 packages)
- once the above is done, install whiptail:arm64 and rejoice at
  running a 64-bit binary!
- then painfully go through sets of packages and migrate the set to
  arm64:
  - sometimes this works via apt, sometimes you'll need to use dget
    and dpkg -i
  - make sure you download both the armhf and arm64 versions before
    doing dpkg -i, since you'll need to roll back some installs
- at one point, you'll be able to switch over dpkg and apt to arm64,
  at which point the default architecture flips over; from here, if
  you've done it at the right moment, it becomes very easy; you'll
  probably need an apt install --fix-broken, though, at first
- and then, finish by replacing all packages with arm64 versions
- and then, dpkg --remove-architecture armhf, reboot, and profit!
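The opening steps of the list can be sketched as a dry-run script. The names run and start_crossgrade are invented for this example, and the sketch assumes the apt sources have already been edited by hand to include the 64 bit repos; it deliberately stops before the libc install, which needs manual care.

```shell
#!/bin/sh
# Dry-run by default: print each command instead of running it.
# Set DRY_RUN=0 (as root) to actually execute them.
run() {
    if [ "${DRY_RUN:-1}" = 1 ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

# Hypothetical wrapper for the first steps only.
start_crossgrade() {
    run apt-get install -y devscripts          # provides dget
    run dpkg --add-architecture arm64          # allow arm64 packages
    run apt-get update                         # fetch the arm64 package lists
    run dpkg --print-foreign-architectures     # should now list arm64
}
```

Calling start_crossgrade with DRY_RUN unset only prints the plan, which is a cheap way to review it before touching a live system.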

But it's tears and blood to get to that point.

Pain point 1: RPI custom versions of packages

Since the 32bit armhf architecture is a bit weird - having many
variations - it turns out that raspberry pi OS has many packages that
are very slightly tweaked to disable a compilation flag or work around
build/test failures, or whatnot. Since we talk here about 64-bit
capable processors, almost none of these are needed, but they do make
life harder since the 64 bit version doesn't have those overrides.

So what is needed would be to say "downgrade all armhf packages to the
version in debian upstream repo", but I couldn't find the right apt
pinning incantation to do that. So what I did was to remove the 32bit
repos, then use apt-show-versions to see which packages have
versions that are no longer in any repo, then downgrade them.

There's a further, minor, complication that there were about 3-4
packages with the same version but a different hash (!), which simply
needed apt install --reinstall, I think.
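Finding the packages to downgrade can be scripted by filtering the apt-show-versions output. The sketch below is an assumption-laden illustration: the function name orphaned_versions is made up, and it relies on apt-show-versions marking such packages with the text "No available version in archive", which should be checked against the output on your own system.

```shell
#!/bin/sh
# Reads apt-show-versions output on stdin and prints the names of packages
# whose installed version is not offered by any configured repository.
# ASSUMPTION: such lines end in "No available version in archive".
orphaned_versions() {
    awk '/No available version in archive$/ { print $1 }'
}

# Typical use, after removing the 32bit rpi repos and running apt update:
#   apt-show-versions | orphaned_versions
```

The resulting list is what then has to be downgraded by hand to the plain Debian version.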

Pain point 2: architecture independent packages

There is one very big issue with dpkg in all this story, and the one
that makes things very problematic: while you can have a library
package installed multiple times for different architectures, as the
files live in different paths, a non-library package can only be
installed once (usually). For binary packages (arch:any), that is
fine. But architecture-independent packages (arch:all) are
problematic since usually they depend on a binary package, but they
always depend on the default architecture version!

Hrmm, and I just realise I don't have logs from this, so I'm only
~80% confident. But basically:

- vim-solarized (arch:all) depends on vim (arch:any)
- if you replace vim armhf with vim arm64, this will break
  vim-solarized, until the default architecture becomes arm64

So you need to keep track of which packages apt will de-install, for
later re-installation.
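One way to keep track of what apt is about to de-install is to run the same command in simulation mode first and record the removals. A minimal sketch, assuming the usual apt-get -s output where each planned removal is printed as a line starting with "Remv"; the function name planned_removals and the file name removed.txt are invented here.

```shell
#!/bin/sh
# Reads "apt-get -s install ..." output on stdin and prints the name of
# every package the simulated run would remove (lines starting with "Remv").
planned_removals() {
    awk '$1 == "Remv" { print $2 }'
}

# Typical use, before doing the real install:
#   apt-get -s install vim:arm64 | planned_removals >> removed.txt
```

Once the default architecture has flipped to arm64, the names collected in the file can be fed back to apt install.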

It is possible that Multi-Arch: foreign solves this, per the debian
wiki which says:

Note that even though Architecture: all and Multi-Arch: foreign
may look like similar concepts, they are not. The former means that
the same binary package can be installed on different
architectures. Yet, after installation such packages are treated as
if they were "native" architecture (by definition the architecture
of the dpkg package) packages. Thus Architecture: all packages
cannot satisfy dependencies from other architectures without being
marked Multi-Arch foreign.

It also has warnings about how to properly use this. But, in general,
not many packages have it, so it is a problem.
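To get a feeling for how many installed arch:all packages lack the marker, the Multi-Arch field can be read from the dpkg database. This is a sketch rather than a complete check: the function name arch_all_not_foreign is made up, and not every package it lists will actually break, only those depending on an arch:any package that you are migrating.

```shell
#!/bin/sh
# Reads lines of "package architecture multi-arch" on stdin (the last field
# is empty when the package has no Multi-Arch header) and prints the
# arch:all packages that are not marked Multi-Arch: foreign.
arch_all_not_foreign() {
    awk '$2 == "all" && $3 != "foreign" { print $1 }'
}

# Typical use:
#   dpkg-query -W -f='${Package} ${Architecture} ${Multi-Arch}\n' | arch_all_not_foreign
```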

Pain point 3: remove + install vs overwrite

It seems that depending on how the solver computes a solution, when
migrating a package from 32 to 64 bit, it can choose either to:

- overwrite the package in place (akin to dpkg -i)
- remove + install later

The former is OK, the latter is not. Or, actually, it might be that
apt can never do the former, for example (edited for brevity):

# apt install systemd:arm64 --no-install-recommends
The following packages will be REMOVED:
  systemd
The following NEW packages will be installed:
  systemd:arm64
0 upgraded, 1 newly installed, 1 to remove and 35 not upgraded.
Do you want to continue? [Y/n] y
dpkg: systemd: dependency problems, but removing anyway as you requested:
 systemd-sysv depends on systemd.
Removing systemd (247.3-7+deb11u2) ...
systemd is the active init system, please switch to another before removing systemd.
dpkg: error processing package systemd (--remove):
 installed systemd package pre-removal script subprocess returned error exit status 1
dpkg: too many errors, stopping
Errors were encountered while processing:
 systemd
Processing was halted because there were too many errors.

But at the same time, overwrite in place is all good - via dpkg -i
from /var/cache/apt/archives.

In this case it manifested via a prerm script, in other cases it
manifests via dependencies that are no longer satisfied for packages
that can't be removed, etc. etc. So you will have to resort to
dpkg -i a lot.
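Since the dpkg -i route needs the .deb file from the apt cache, a tiny helper to build its path saves some typing. This is a sketch under assumptions: the function name cached_deb_glob is invented, the version part is left as a wildcard, and the cache directory defaults to the standard /var/cache/apt/archives.

```shell
#!/bin/sh
# Prints a glob matching the cached .deb of a package for one architecture.
#   $1: package name, $2: architecture, $3: optional cache directory
cached_deb_glob() {
    printf '%s/%s_*_%s.deb\n' "${3:-/var/cache/apt/archives}" "$1" "$2"
}

# Typical use, as root; the unquoted substitution lets the shell expand the glob:
#   apt-get install --download-only systemd:arm64
#   dpkg -i $(cached_deb_glob systemd arm64)
```

If several versions of the same package sit in the cache the glob matches all of them, so clean the cache or pick the file by hand in that case.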

Pain point 4: lib- packages that are not lib

During the whole process, it is very tempting to just go ahead and
install the corresponding arm64 package for all armhf lib packages,
in one go, since these can coexist.

Well, this simple plan is complicated by the fact that some packages
are named libfoo-bar, but are actually holding (e.g.) the bar binary
for the libfoo package. Examples:

- libmagic-mgc contains /usr/lib/file/magic.mgc, which conflicts
  between the 32 and 64 bit versions; of course, it's the exact same
  file, so this should be an arch:all package, but it is not
- libpam-modules-bin and liblockfile-bin actually contain binaries
  (per the -bin suffix)

It's possible to work around all this, but it changes a 1 minute:

# apt install $(dpkg -l | grep ^ii | awk '{print $2}' | grep :armhf | sed -e 's/:armhf/:arm64/')

into a 10-20 minutes fight with packages (like most other steps).
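A somewhat more careful variant of that one-liner is to go by the Multi-Arch: same header, which is how a package declares that it can be co-installed for several architectures, instead of going by name alone. This is a sketch, not what I actually ran: the function name coinstallable_as_arm64 is made up, and packages that the filter drops still have to be migrated by hand.

```shell
#!/bin/sh
# Reads lines of "package architecture multi-arch" on stdin and prints, as
# name:arm64, the armhf lib* packages that declare Multi-Arch: same.
coinstallable_as_arm64() {
    awk '$1 ~ /^lib/ && $2 == "armhf" && $3 == "same" { print $1 ":arm64" }'
}

# Typical use, as root:
#   apt install $(dpkg-query -W -f='${Package} ${Architecture} ${Multi-Arch}\n' \
#       | coinstallable_as_arm64)
```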

Is it worth doing?

Compared to the simple bullseye to bookworm upgrade, I'm not sure
about this. The result? Yes, definitely, the system feels - weirdly -
much more responsive, logged in over SSH. I guess the arm64 base
architecture has some more efficient ops than the "lowest denominator
armhf", so to say (e.g. there was in the 32 bit version some
rpi-custom package with string ops), and thus migrating to 64 bit
makes "more things faster", but this is subjective so it might
actually not be true.

But from the point of view of the effort? Unless you like to play with
dpkg and apt, and understand how these work and break, I'd rather say,
migrate to ansible and automate the deployment. It's doable, sure, and
by the third system, I got this nailed down pretty well, but it was a
lot of time spent.

The good aspect is that I did 3 migrations:

- rpi zero w2: bullseye 32 bit to 64 bit, then bullseye to bookworm
- rpi 4: bullseye to bookworm, then bookworm 32bit to 64 bit
- same, again, for a more important system

And all three worked well, with no data loss. But I'm really glad I
have this behind me, I probably wouldn't do a fourth system, even if
forced.

And now, waiting for the RPI 5 to be available. See you!




My previously mentioned

Physical keyboard found.

Physical keyboard found.

I very, very nearly didn t make it to DebConf this year, I had a bad cold/flu for a few days before I left, and after a negative covid-19 test just minutes before my flight, I decided to take the plunge and travel.

This is just everything in chronological order, more or less, it s the only way I could write it.

I very, very nearly didn t make it to DebConf this year, I had a bad cold/flu for a few days before I left, and after a negative covid-19 test just minutes before my flight, I decided to take the plunge and travel.

This is just everything in chronological order, more or less, it s the only way I could write it.

If you got one of these Cheese & Wine bags from DebConf, that s from the South African local group!

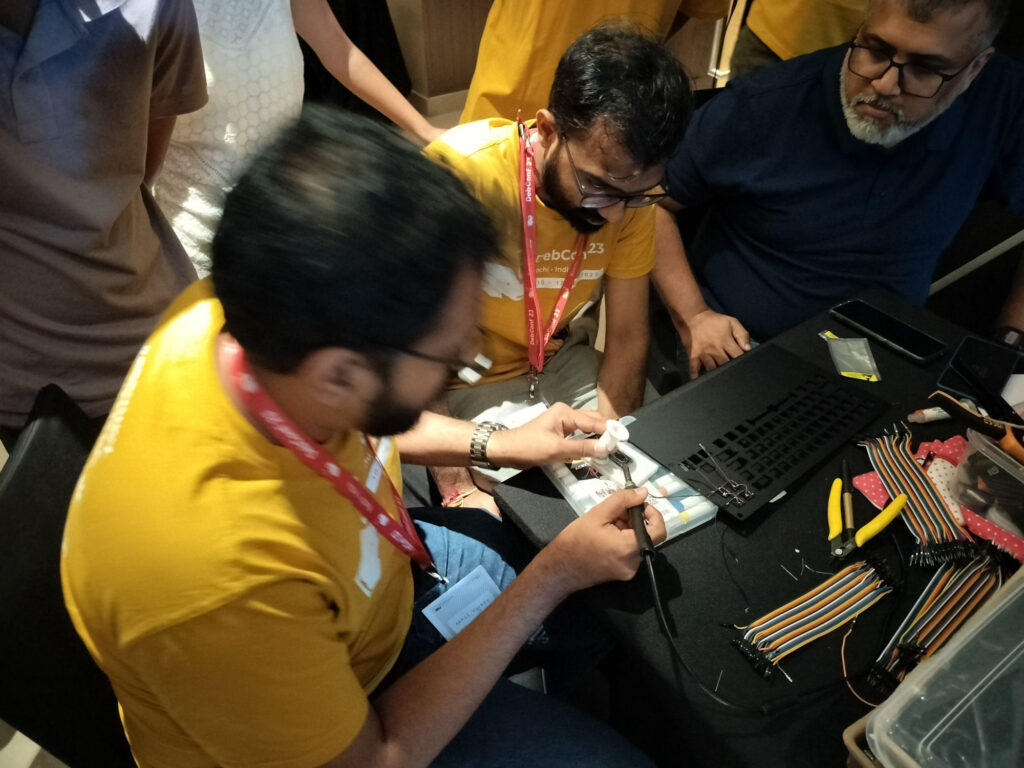

If you got one of these Cheese & Wine bags from DebConf, that s from the South African local group! Some hopefully harmless soldering.

Some hopefully harmless soldering.

TPMs contain a set of registers ("Platform Configuration Registers", or PCRs) that are used to track what a system boots. Each time a new event is measured, a cryptographic hash representing that event is passed to the TPM. The TPM appends that hash to the existing value in the PCR, hashes that, and stores the final result in the PCR. This means that while the PCR's value depends on the precise sequence and value of the hashes presented to it, the PCR value alone doesn't tell you what those individual events were. Different PCRs are used to store different event types, but there are still more events than there are PCRs so we can't avoid this problem by simply storing each event separately.

TPMs contain a set of registers ("Platform Configuration Registers", or PCRs) that are used to track what a system boots. Each time a new event is measured, a cryptographic hash representing that event is passed to the TPM. The TPM appends that hash to the existing value in the PCR, hashes that, and stores the final result in the PCR. This means that while the PCR's value depends on the precise sequence and value of the hashes presented to it, the PCR value alone doesn't tell you what those individual events were. Different PCRs are used to store different event types, but there are still more events than there are PCRs so we can't avoid this problem by simply storing each event separately.